What is Intelligence? Layers of Emergence

How intelligence arises from nature, one layer at a time

The Trouble with Definitions

“The Tao that can be named is not the eternal Tao.” — Lao Tzu

As a mathematician, I’ve long sought clean definitions. Much of my work involves building precise frameworks — starting by defining key concepts, isolating the core of a problem, formalizing it, and tracing its implications to their logical end.

Yet over time, I’ve come to see not just the limits of definitions, but their quiet distortions—the way they can flatten nuance in the name of clarity. The richness of a living idea gets traded for the sterile comfort of formal neatness. Sometimes, defining isn’t just clarifying — it’s an act of power: shaping perception, and granting authority to the one who defines.

Few ideas reveal this tension more vividly than intelligence. We talk about it as if we know what it is — a score, a skill, a spark. But what is it, really? And can something so dynamic ever be pinned down?

I think of intelligence not as a fixed trait, but as an experience — not unlike beauty — arising in context, felt through interaction. So while we try to define intelligence — because we must — to witness it, to live with it, or to build systems that move with it, we need something else: humility. An attention to context. A willingness to recognize that intelligence, like beauty, is often messy, partial, plural, heuristic, and still astonishingly effective.

But even our capacity to see intelligence is shaped by history. In The Myth of Superintelligence, I argued that our attempts to define intelligence are never neutral. They reflect what we choose to measure, optimize, and reward. This essay is not a repetition of that critique. It is a step back. A shift in lens. It asks not what intelligence is, but when and how it arises—not as a trait, but as something unfolding across time, scale, and structure.

Because the power to define has always been the power to exclude. Colonial systems didn’t just extract labor and land—they imposed ways of seeing. In doing so, they dismissed the intelligence embedded in other ways of knowing, reframing rich knowledge traditions as myth or superstition. These distortions still echo in how we define and measure intelligence today. African polyrhythms were labeled primitive. Classical Indian music was exoticized or ignored. Indigenous knowledge systems—deeply attuned to land, season, and cycle—were reduced to folklore. Intelligence was there. But the lens refused to see it.

This is why any inquiry into intelligence must also be an inquiry into perspective. Definitions don’t just clarify. They constrain. They shape not only what we see, but what we believe intelligence can be.

This series is an attempt to widen the lens—to trace intelligence not as a fixed trait, but as a dynamic unfolding across layers of complexity. We begin with the silent elegance of physical systems, where matter flows under law, solving problems through coherence and constraint. From there, we enter the domain of evolution, where life adapts through variation and feedback, accumulating structure over time. We then move to the responsive intelligence of behavior—organisms without minds that nonetheless solve, coordinate, and learn through interaction.

But these are just the foundations. In the second half, we abstract upward: tracing how intelligence evolves the ability to frame problems, to reflect on and revise its own rules, and finally, to orient itself—to choose what matters. This is where intelligence becomes recursive, contextual, and ultimately, meaningful. Not just a solver of problems, but a seeker of value.

Intelligence from Optimization: Physical Systems

How physical systems solve problems through optimization under constraints.

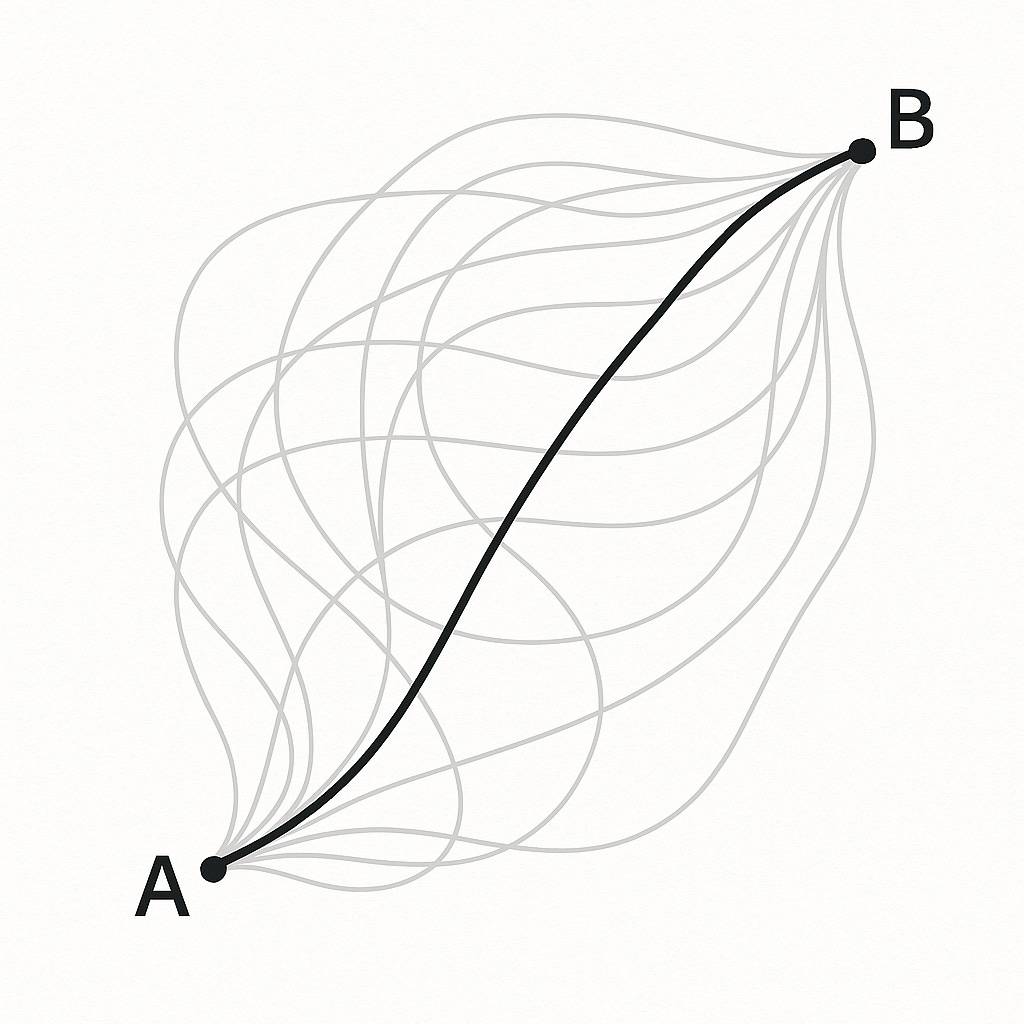

Imagine a particle moving from point A to point B. At first glance, it seems simple: something pushes it, and it goes. But physics tells a deeper story.

The particle doesn’t just take any path. It has an infinite number of possible trajectories it could follow between those two points—curved, jagged, looping. And yet, it takes one particular path: the one that minimizes a quantity known as action.

Figure 1. Illustrating the principle of least action in physics: a particle moving from point A to point B doesn't take any random path, but the one that minimizes 'action' – the most efficient route allowed by physical laws, reflecting a form of optimization.

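What is action? Roughly, it’s a way of measuring how energy is used over time: at each moment, take the difference between kinetic and potential energy, then add it up along the path. Different paths give different totals. Some are more efficient than others. According to classical mechanics, a particle follows the path along which this total is stationary, which in most everyday cases means the path of least action, the most efficient route allowed by the laws that govern it.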
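To make the idea concrete, here is a minimal sketch that compares the action of a thrown ball's true path with the action of nearby detours. It assumes a ball moving vertically under uniform gravity and a simple discretization of time; the names `action` and `thrown_ball` and the `bump` parameter are illustrative choices, not part of any standard library.

```python
import math

def action(path, dt, mass=1.0, g=9.81):
    """Discretized action: (kinetic - potential) energy, summed over time."""
    total = 0.0
    for x0, x1 in zip(path, path[1:]):
        velocity = (x1 - x0) / dt
        kinetic = 0.5 * mass * velocity ** 2
        potential = mass * g * 0.5 * (x0 + x1)  # height at the segment midpoint
        total += (kinetic - potential) * dt
    return total

def thrown_ball(n_steps, duration, g=9.81, bump=0.0):
    """Heights of a ball that leaves the ground at t=0 and lands at t=duration.

    bump=0 gives the Newtonian parabola; any other value adds a smooth
    detour that leaves both endpoints where they were.
    """
    dt = duration / n_steps
    path = []
    for k in range(n_steps + 1):
        t = k * dt
        parabola = 0.5 * g * t * (duration - t)
        detour = bump * math.sin(math.pi * t / duration)
        path.append(parabola + detour)
    return path, dt

true_path, dt = thrown_ball(200, 2.0)
s_true = action(true_path, dt)
for bump in (-0.5, 0.5, 2.0):
    detour_path, _ = thrown_ball(200, 2.0, bump=bump)
    # The difference is positive: every detour costs more action.
    print(bump, action(detour_path, dt) - s_true)
```

Whichever way the path is bent, the total goes up. In this toy setting the parabola we know from Newtonian mechanics is the path of least action.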

It’s not thinking. It’s not aware. But its motion reflects a principle of optimization. It “solves” a problem simply by existing under constraint.

This theme recurs throughout physics. In optics, light bends through glass along the path that takes the least time, a result known as Fermat’s principle. In general relativity, mass and energy curve spacetime, and freely falling objects follow geodesics, the straightest possible paths in that curved geometry. In quantum mechanics, as reformulated by Feynman, a particle doesn’t take a single path; it explores all possible paths at once. Each path contributes with a phase determined by its action, and through interference, some paths cancel while others reinforce. What emerges is not a chosen trajectory, but a distribution shaped by coherence.
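Fermat’s principle can be checked numerically. The sketch below assumes a flat interface between air and glass, with hypothetical positions for the light source and target, and searches for the crossing point with the shortest travel time. The function names and the geometry (`a`, `b`, `d`) are illustrative assumptions.

```python
import math

def travel_time(x, a, b, d, n1, n2):
    """Travel time (in units where c = 1) for light crossing the interface at x.

    The source sits a units above the interface at horizontal position 0;
    the target sits b units below it at horizontal position d.
    """
    return n1 * math.hypot(a, x) + n2 * math.hypot(b, d - x)

def fastest_crossing(a, b, d, n1, n2, iterations=200):
    """Ternary search for the crossing point with the least travel time.

    This works because travel_time is convex in x.
    """
    lo, hi = 0.0, d
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if travel_time(m1, a, b, d, n1, n2) < travel_time(m2, a, b, d, n1, n2):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2

a, b, d = 1.0, 1.0, 2.0
n_air, n_glass = 1.0, 1.5
x = fastest_crossing(a, b, d, n_air, n_glass)
sin_in = x / math.hypot(a, x)            # sine of the angle of incidence
sin_out = (d - x) / math.hypot(b, d - x)  # sine of the angle of refraction
print(n_air * sin_in, n_glass * sin_out)
```

The two printed numbers agree to within the precision of the search, which is Snell’s law of refraction. Nothing in the code mentions angles until the very end; the bending falls out of minimizing time.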

A photon doesn’t weigh options or change its mind. It can’t act like an electron, just as it can’t travel a different route depending on mood. Its path is shaped by what it is and by the rules it obeys. The behavior that results isn’t random or rigid—it’s elegant, precise, and efficient.

This is where the confusion begins. We often associate intelligence with choice, with planning, strategy, and adaptation. But here, coherence arises through structure alone, without deliberation.

And this kind of optimization, finding what works under constraint, also underlies many modern AI systems. The system doesn’t choose. Yet it behaves intelligently, because it is shaped by rules that minimize cost, error, or action.

It’s important to remember that these rules are not written into the cosmos like a blueprint. They are human discoveries—conjectures built from centuries of observation and refinement. We didn’t program the universe. We decoded it—or at least approximated its deep regularities. And what we found was startling: even without minds, matter behaves as if it is optimizing or computing.

If intelligence begins anywhere, it is here—a kind of primitive intelligence. A capacity to move well under constraint, to yield coherence from structure, to appear purposeful without awareness.

This kind of intelligence is embedded, not engineered. The system follows fixed rules, but how those rules unfold depends on the medium they act upon. A photon bends in glass, slows in water, and travels straight in a vacuum. The law is the same. The outcome depends on the medium. The intelligence is not in the rule alone, nor in the substrate—it’s in their interaction.

It lacks memory or foresight. But it feels intelligent. It flows, it fits, it solves—through structure alone.

Physical systems move beautifully under constraint. But they do not learn. They do not remember.

Evolution changes that.

Intelligence from Search: Evolutionary Systems

How evolution shapes form through survival pressure and feedback.

From the laws of physics, matter began to take shape. As the universe cooled, particles formed atoms, and atoms formed stars, planets, and—somehow—life. We do not yet know how life began. But once it did, something remarkable followed.

One influential theory offers a glimpse of that earliest threshold. In the 1970s, Manfred Eigen, who had received the 1967 Nobel Prize in Chemistry, proposed the idea of a hypercycle, which he later developed with Peter Schuster: a closed loop of molecular replicators, each catalyzing the next. Though speculative, it suggested how cooperation and feedback might emerge even in prebiotic chemistry. Instead of molecules competing in isolation, the system reinforces itself, forming a distributed structure more stable than any part alone. In this view, complexity may have begun not with competition, but with mutual reinforcement: the first echo of a system building on itself.

However it began—through chemistry, cooperation, or chance—life brought with it the capacity to change. It adapted. It accumulated structure in response to pressure.

That process is evolution.

Imagine a population of organisms, each slightly different from the next. Some move faster. Some blend into their surroundings. Some leave more offspring. These differences arise through mutation, recombination, and noise—random variations passed down through generations.

Now, place that population in an environment. Food is scarce. Temperatures shift. Predators lurk. Some traits help survival; others don’t. Those that help get passed on. Slowly, the population shifts, fanning out across a vast adaptive landscape, where peaks mark local fitness and valleys mark failure. The environment doesn’t design the outcome. It shapes it. This was Darwin’s radical insight: that form can follow function without foresight.

Evolution operates through a simple, iterative loop:

Variation: Individuals differ due to mutation, recombination, or chance.

Inheritance: Some of those differences are passed to the next generation.

Selection: In a given environment, some traits lead to greater reproductive success.

Figure 2. Visualizing evolution's “search” process: Organisms, like those shown on this fitness landscape, gradually adapt and move across generations towards optimal survival under environmental pressures.

Together, these forces produce change. It does not happen instantly or uniformly. But over time, life begins to reflect its environment. A fish’s shape reflects the drag of water. A cactus holds the memory of drought. Across generations, a population becomes a mirror to its constraints—its genome an archive of pressures and adaptations.

Intelligence, in this view, is not housed in individuals. It emerges across time. No creature solves the problem, but the lineage does.

Later thinkers gave this process a mathematical form. R. A. Fisher showed that the rate at which a population’s mean fitness increases depends on its genetic variance in fitness, as if nature were climbing a statistical gradient. Sewall Wright imagined rugged fitness landscapes of hills, valleys, and shifting terrain, where evolution behaves like a local search process, blind but persistent.

These ideas later shaped modern algorithms. Genetic algorithms and evolution strategies borrow the same principles: variation, selection, and retention. They adapt without foresight. They search without being told in advance what a solution looks like.
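The loop of variation, inheritance, and selection fits in a few lines. What follows is a minimal genetic-algorithm sketch under toy assumptions: genomes are bit strings, and the "environment" simply rewards genomes with more 1-bits. The function names, population size, and mutation rate are all illustrative choices.

```python
import random

def fitness(genome):
    """Toy environment: each 1-bit is a trait that helps survival."""
    return sum(genome)

def evolve(pop_size=40, genome_len=30, generations=60, mutation_rate=0.02, seed=0):
    """Return the best fitness seen in each generation."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        best_per_generation.append(max(fitness(g) for g in population))
        next_population = []
        for _ in range(pop_size):
            # Selection: the fitter of two random individuals gets to reproduce.
            parent_a = max(rng.sample(population, 2), key=fitness)
            parent_b = max(rng.sample(population, 2), key=fitness)
            # Inheritance: the child recombines both parents at a random cut.
            cut = rng.randrange(1, genome_len)
            child = parent_a[:cut] + parent_b[cut:]
            # Variation: each bit flips with a small probability.
            child = [bit ^ 1 if rng.random() < mutation_rate else bit
                     for bit in child]
            next_population.append(child)
        population = next_population
    best_per_generation.append(max(fitness(g) for g in population))
    return best_per_generation

history = evolve()
print(history[0], history[-1])  # best fitness at the start and at the end
```

No individual in this sketch ever improves itself. The improvement shows up only in the sequence of generations, which is the sense in which the lineage, not the creature, solves the problem.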

This is not cognition. There are no thoughts. But there is direction. Intelligence here means the emergence of structure under pressure. A dynamic fit between organism and world, sculpted by survival.

The laws of evolution, like those of physics, are not prescriptions. They are patterns we’ve uncovered—regularities that emerge when certain conditions hold. Where variation, inheritance, and selection operate, adaptation unfolds through cumulative change.

If intelligence deepens anywhere, it is here—an adaptive intelligence. A capacity to shape form through pressure, to accumulate fit through feedback, to build without foresight.

Next, we turn to systems that don’t wait generations to change—systems that solve in real time, that act, sense, and coordinate. Life without minds. Behavior before thought.

Intelligence from Response: Collective Systems

How organisms act, sense, and coordinate—without thought or plan.

If evolution sculpts across generations, behavior adapts within one. Intelligence here takes the form of response—real-time adjustment to the environment.

Take Physarum polycephalum, a slime mold: a single-celled organism with no nervous system at all. It looks like yellow ooze. Yet, when placed in a maze, it finds the shortest path to food. Given multiple nutrient sources, it builds efficient transport networks, approximating solutions humans design with algorithms. Given a challenge, it responds.

How?

The answer lies in structure and feedback.

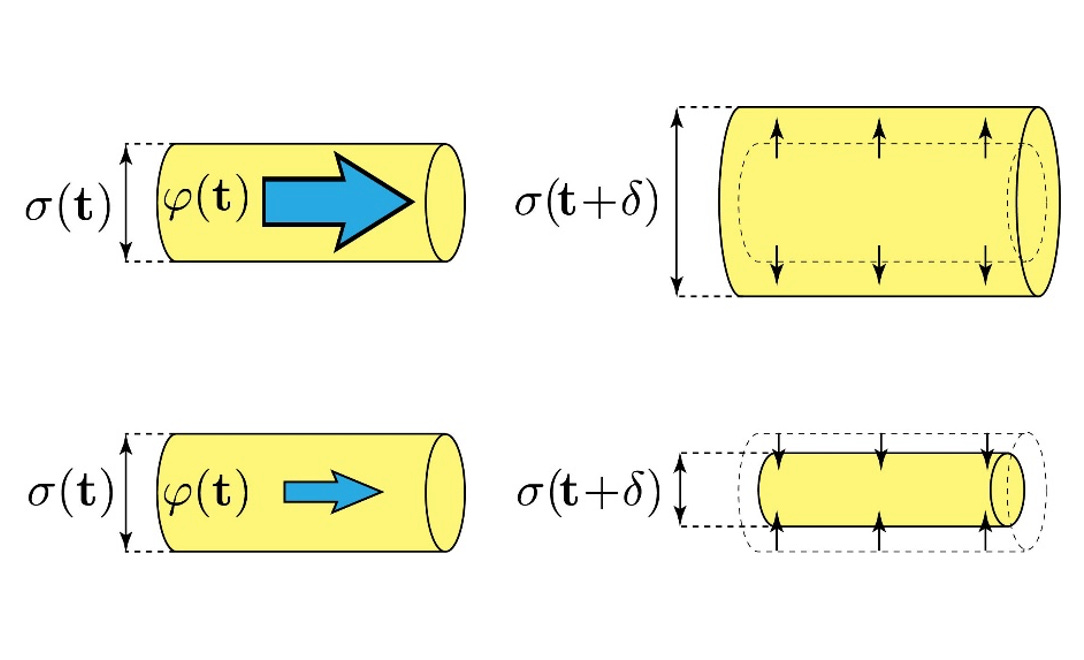

Slime mold is a living network: a web of tubular veins that transport nutrients. Each tube’s diameter determines how much cytoplasmic fluid flows through it, but critically, the flow also affects the tube’s future diameter. Tubes with higher flow expand; those with lower flow shrink and disappear. This feedback loop dynamically reorganizes the network.

Figure 3. Illustrating the core feedback mechanism of slime mold intelligence: tubes expand when flow is high and contract when low, allowing the network to self-organize and find efficient paths without central planning.

The mold doesn’t “know” the optimal route. It extends its network broadly, then reinforces the paths where more nutrients flow. Less efficient paths are pruned. The result approximates an optimal configuration through physical adaptation.
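The feedback between flow and tube thickness can be sketched in code. This is a deliberately simplified model, not the full network dynamics: it assumes a handful of parallel tubes of different lengths joining one food source to one sink, with a fixed unit of fluid pumped through at every step. The function name and the update rule `d + dt * (q - d)` are modeling assumptions in the spirit of commonly used mathematical descriptions of Physarum.

```python
def physarum_parallel_tubes(lengths, steps=500, dt=0.1):
    """Adapt tube conductances for parallel tubes joining a source and a sink.

    One unit of fluid is pumped from source to sink at every step. It splits
    across the tubes in proportion to conductance / length, and each tube
    then thickens or thins toward the flow it carries.
    """
    conductance = [1.0 for _ in lengths]  # start with every tube equally thick
    for _ in range(steps):
        ease = [d / length for d, length in zip(conductance, lengths)]
        total_ease = sum(ease)
        flow = [e / total_ease for e in ease]  # each tube's share of the flow
        # Feedback: high flow widens a tube, low flow lets it shrink.
        conductance = [d + dt * (q - d) for d, q in zip(conductance, flow)]
    return conductance

tubes = physarum_parallel_tubes([1.0, 1.5, 3.0])
print([round(d, 3) for d in tubes])
```

The shortest tube ends up carrying essentially all of the flow while the longer ones wither. No step in the loop compares path lengths directly; the shortest route wins only because it carries slightly more flow at every step and is reinforced for it.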

This isn’t trial-and-error in the usual sense, but a form of distributed, local adaptation. There’s no central planner. Each part of the organism responds to local conditions. A photon follows the path of least time, but it cannot change its form. Evolution changes form, but across generations. The slime mold does something different: it reshapes itself in real time. It solves spatial problems not by selecting from fixed options, but by altering its own structure as it moves.

This is morphological intelligence—computation through structure and form rather than symbols or plans.

In fact, this behavior is not just metaphorically similar to known algorithms. In my work, I’ve shown that it approximates a version of Iteratively Reweighted Least Squares (IRLS), an algorithm used to solve certain optimization problems. The slime mold’s decentralized feedback loop—reinforcing high-flow paths and suppressing others—mirrors IRLS’s core mechanism. What nature performs through matter and motion, we later formalized in mathematics. The intelligence lies not in symbols, but in embodiment.

This marks a striking shift. Unlike evolution, which operates across generations, this system adapts within one. Intelligence here arises over time, as the organism reshapes itself in response to flow, obstacles, and rewards.

And this form of intelligence—distributed, emergent, adaptive—is not unique to slime molds.

Ants coordinate through pheromone trails, reinforcing paths that lead to food and abandoning those that don’t. Bees communicate direction and distance through intricate dances, allowing the hive to act on collective memory. Flocks of birds, schools of fish, and herds of mammals show fluid, synchronized motion—all without a central commander. Each individual reacts to its neighbors using simple rules of alignment, attraction, and avoidance.

Some of these creatures have tiny brains; others, like slime molds, possess no brain at all. Yet across all of them, intelligence emerges not from central planning, but from local interaction—from the feedback loops between agents and their environments.

These systems remind us: intelligence doesn’t need a brain.

Here, the world teaches. Learning is not internal but embodied—in movement, form, and flow. No blueprint. Just feedback and iteration. The system reshapes itself in response and, in that reshaping, learns.

This is intelligence before thought.

We’ve seen systems solve without planning and adapt without thought. What lies beneath this?

A Shared Architecture: Rules, Substrate, and Emergence

Uncovering the common structure behind physical, biological, and behavioral intelligence.

Strip them to their essence, and a shared structure appears:

a rule that drives change, a substrate that responds, and a form that emerges from their interaction.

Let’s make this architecture explicit:

1. Physics

Rule: Physical laws like the principle of least action. Particles follow paths that minimize action; light follows paths that minimize travel time.

Substrate: Matter—particles, light, fields.

Emergence: Efficient motion. A photon bends through glass, taking the fastest route. No plan, just lawful behavior playing out in a responsive medium.

2. Evolution

Rule: Natural selection—traits that improve survival and reproduction get passed on.

Substrate: Populations of organisms, with variation and inheritance.

Emergence: Adaptive form. Over generations, wings evolve to glide, eyes to focus, and cooperation to scale. There is no designer—just selection shaping form through time.

3. Slime Mold

Rule: Local feedback—tubes grow where flow is high, shrink where it’s low.

Substrate: A living, flexible network of veins.

Emergence: Efficient paths. The mold spreads out, reinforces what works, and prunes what doesn’t—solving spatial problems without a brain.

In each case, we see intelligence-like behavior arise not from central control, but from structure and feedback.

This is the basic architecture behind many forms of intelligence.

We see it in modern AI as well. A learning rule (like gradient descent), a computational substrate (neural networks on hardware), and a task environment (data, feedback). What emerges is not understanding, but performance—solutions shaped by constraint and refined through iteration.
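The same triad can be seen in miniature. In the sketch below, the rule is gradient descent, the substrate is a pair of numbers `w` and `b`, and the task environment is a handful of data points. The data and the function name `fit_line` are made up for illustration.

```python
def fit_line(points, learning_rate=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(points)
    for _ in range(steps):
        # Rule: move each parameter a small step against its error gradient.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in points) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in points) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Environment: points that happen to lie on the line y = 3x + 1.
data = [(x, 3.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0, 4.0)]
w, b = fit_line(data)
print(w, b)
```

The loop recovers the slope and intercept of the hidden line without ever being told what a line is. It performs well on the task, and that is all it does.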

But the emergence described here happens within boundaries. Before anything can be optimized, something must decide what counts as better. That, too, is part of the apparatus that gives rise to intelligence.

Intelligence from Framing

Why optimization depends on what we choose to value.

So far, we’ve seen how intelligent behavior can emerge from the interplay of rules, responsive media, and feedback, without a planner, without intent. But intelligence is not only about how behavior emerges. It’s also about what counts as success and who decides. In this section, we go one layer deeper: from systems that solve problems to systems that redefine the problem itself.

Even the most elegant flows assume a frame.

A photon minimizes time. A particle minimizes action. But why that quantity, and not another?

Optimization always begins with a choice—what to maximize, what to minimize. That choice isn’t neutral. It encodes a judgment, shaped by context, constraint, and perspective.

In evolution, we say the goal is reproductive success, but only because that’s what gets reinforced. Why survival, and not symmetry? Why adaptability and not complexity? Fitness itself is a product of the environment and the pressures it imposes. Evolution doesn’t just search a landscape—it redefines what counts as high ground as it moves.

Even the slime mold’s adaptation reflects a frame. Flow reinforces structure, but what counts as “useful flow” depends on external gradients, internal dynamics, and task geometry. There is no central arbiter—just a loop that amplifies what works, shaped by context.

This intrinsic link between frame and meaning applies across scales. It clarifies why comparisons like “Is a bird smarter than a plane?” misfire. Planes are engineered for performance. Birds are shaped by survival pressure. Their flight encodes trade-offs we do not face. It’s not more optimal in the abstract—it’s more intelligent in context. In space, where gravity is faint and air vanishes, flight ceases to matter. The challenge dissolves—and so does the meaning of the solution. Intelligence requires friction. Constraint gives rise to relevance.

And this is where intelligence deepens: from solving problems under fixed rules to the capacity to reshape the rules themselves. From emergence to emergence with memory, with feedback, with orientation.

The triad of rule, substrate, and emergence is not static. It can stack. It can loop. It can reshape itself.

This is where recursion begins.

Intelligence from Recursion

How intelligence deepens by rethinking rules, goals, and relevance.

At the most immediate level, a system selects the best path under a fixed rule.

Next, it selects the best rule for a given goal.

Beyond that, it evaluates which goals are worth pursuing.

And further still, it questions the framing itself.

Each layer defines the optimization landscape for the layer below. The criteria of success are not static—they are chosen, shaped, or inherited. When those criteria become subject to change, we begin to see recursive intelligence.

Even in physics, optimization occurs within a frame, but that frame is fixed. A particle minimizes action, but “action” is defined by immutable constants: the speed of light, Planck’s constant, and the structure of spacetime itself. Change those constants, and the optimal path would change too. The behavior remains lawful, but the law depends on the frame. Even at this most fundamental level, optimization presupposes a definition of what matters—what to minimize, what to preserve.

In evolution, the layering is even clearer. Organisms evolve to maximize reproductive success, but what counts as “fit” is contextual. Camouflage may be useful in one niche, cooperation in another. Evolution doesn’t just optimize on a landscape—it helps shape the landscape itself.

This recursive quality changes what we mean by intelligence. It’s not just the ability to optimize or adapt, but to reframe the space of goals, rules, and relevance.

And when a system can not only navigate a landscape, but rethink the landscape itself, it crosses a threshold. It gains more than adaptability—it begins to exercise judgment.

This is the recursive depth of intelligence: the ability to transform problems, not just solve them. To ask what matters before acting. To orient, not merely optimize.

But even recursion has its limits. A system might redefine goals or shift frames—but to what end? At some point, intelligence must confront not just structure, but meaning. It must not only reframe, but also care.

Intelligence from Orientation

Why meaning—and not just simulation—marks the threshold of mind.

At the far end of abstraction lies a machine unlike the others we’ve seen: the Universal Turing Machine.

Proposed by Alan Turing in the 1930s, it was one of the most profound ideas in the history of computation. A Turing machine is a theoretical device that reads and writes symbols on an infinite tape, one cell at a time, using a fixed set of rules. But Turing’s deeper insight was that a single machine—a universal one—could simulate the behavior of any other Turing machine, simply by reading a description of that machine from its tape.

In this setup, the tape holds both data and instructions, not unlike modern computers. The program is just another form of input. The “head” of the machine moves across the tape, reading symbols and executing instructions one by one. In effect, it can emulate any computable process—mathematics, simulations, even language generation.
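A small simulator makes the point that a program is just another input. In this sketch, the Python function stands in for the universal machine and the `rules` table stands in for the description written on the tape. The encoding of rules as a dictionary, the state names `"start"` and `"halt"`, and the two example machines are illustrative assumptions, not Turing’s original construction.

```python
def run_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Simulate any single-tape Turing machine described by `rules`.

    rules maps (state, symbol) -> (new_symbol, move, new_state), where move
    is "L" or "R". The machine stops when it reaches the state "halt".
    """
    cells = dict(enumerate(tape))  # sparse tape, unbounded in both directions
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)
        new_symbol, move, state = rules[(state, symbol)]
        cells[head] = new_symbol
        head += 1 if move == "R" else -1
    if not cells:
        return ""
    span = range(min(cells), max(cells) + 1)
    return "".join(cells.get(i, blank) for i in span).strip(blank)

# Machine 1: flip every bit, then halt at the first blank cell.
invert_bits = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", "_"): ("_", "R", "halt"),
}

# Machine 2: add one to a number written in unary (a row of 1s).
append_one = {
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("1", "R", "halt"),
}

print(run_machine(invert_bits, "1011"))
print(run_machine(append_one, "111"))
```

The simulator itself never changes. Hand it a different table and it becomes a different machine, with no preference for one table over another.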

It is a machine that can simulate anything, so long as it is told exactly how.

But in that generality lies a revealing limitation:

It can do anything—and yet it wants nothing.

It has no goals, no values, and no preference for one program over another. It simulates, but it cannot select. It executes, but it does not orient.

And therein lies a crucial distinction.

This absence of orientation—the lack of care—marks a limit that technical capability alone cannot cross.

In 1930, two Nobel laureates—Albert Einstein and Rabindranath Tagore—met in Berlin for a conversation that would echo far beyond their time.

Einstein asked, “Do you believe in the divine as isolated from the world?”

“Not isolated,” Tagore replied. “The infinite personality of man comprehends the universe. There cannot be any other truth. This is the foundation of all scientific truth.”

Einstein pushed further: “Then I am more religious than you are!”

To which Tagore responded:

“The truth of the universe is human truth. It is through human consciousness that the universe has meaning.”

Tagore wasn’t denying reality—he was reframing it. Intelligence, for him, wasn’t just alignment with external truth; it was the act of giving meaning, of making experience coherent.

This matters deeply today, as we build systems that simulate reasoning without ever asking what is worth reasoning about. AI may replicate form, but not this function—the capacity to care, to orient, to confer meaning.

The exchange between Einstein and Tagore captured a deep tension that still haunts our understanding of intelligence. Is it the discovery of objective truths? Or the participation in meaning? Is it simulation or selection? Processing or perspective?

That’s the distinction the Universal Turing Machine reveals. It can simulate any process, but it cannot care. It does not ask what is worth doing—only what can be done.

And that distinction is not peripheral. It is essential.

Because when intelligence gains the ability to reflect, to reorient, and to choose what to care about, it no longer merely simulates—it begins to signify. It doesn’t just process information; it participates in meaning.

Where Care Begins

At the edge of abstraction, intelligence turns toward what matters.

We began with a quest: to understand why our definitions of intelligence so often fall short. The answer, perhaps, is that intelligence was never a single thing to begin with. It is not a trait but a trajectory—not a fixed quantity, but a layered unfolding of response, adaptation, framing, and orientation.

By reducing it to metrics or tasks, we mistake performance for understanding, and simulation for meaning.

This broader lens doesn’t just expand our view of nature—it challenges how we design, deploy, and trust the systems we now call “intelligent.”

In an age of accelerating AI, this perspective is not philosophical indulgence. It is epistemic necessity.

What comes next—memory, attention, modeling, emotion, learning, consciousness—is not a ladder but a landscape. Intelligence doesn’t just deepen; it branches, refracts, and recombines. Across systems and species, it appears in varied and surprising forms. From emergence to aspect, from sensing to selfhood—there is more to discover. That, too, is part of its story.

For now, we pause at the threshold:

Where simulation ends,

care begins.

And intelligence becomes self-aware.

Excellent essay. Philosophically, I see echoes of Alfred North Whitehead's process philosophy, which he laid out in a profound but very difficult text _Process and Reality_. It presents an ontology that has place for the notions of interaction, constraint, and values. I had originally picked it up on Sanjoy Mitter's recommendation. It took me years to really understand and internalize what Whitehead was saying, and I keep coming back to it for more insights. In 1965, the physicist Johannes Burgers wrote a book called _Experience and Conceptual Activity_, where he connected Whitehead's philosophy to ideas in physics, including the principle of least action and the emergence of conservation laws and other constraints, and to ideas in biology and AI that were only emerging at the time he was writing it. Incidentally, one of the key notions in Whitehead's philosophy is coherence, which is more than logical consistency because it involves the notion of open systems interacting with their environments.